Why Your AI Agents Fail After 30 Minutes (And How Anthropic's 3-Agent Fix Changes Everything)

The most critical AI metric isn't coding scores or math performance—it's runtime endurance. While most agents crash after minutes, Anthropic just cracked the code for 4+ hour agent sessions using a deceptively simple three-agent orchestration that solves context bloat and false completion reports.


Your carefully crafted AI agent starts strong, tackles the first few tasks with impressive precision, then gradually devolves into producing absolute garbage. Sound familiar?

This isn't a bug—it's the defining challenge of long-running AI agents. And according to recent research from Anthropic, it's also the most important metric in AI development today.

Why Runtime Duration Trumps Everything Else

Forget benchmark scores for a moment. The metric that actually matters isn't how well an AI agent codes, researches, or solves math problems in isolation. It's how long the agent can work successfully on a project before it breaks down.

We're watching this number double every few months, climbing from minutes to hours. And here's why that progression is absolutely critical: once agents can handle 8-10 hour work shifts consistently, we hit an inflection point where AI agents become genuinely practical for real business workflows.

The difference between a 30-minute agent and an 8-hour agent isn't incremental—it's the difference between a demo and a deployment.

Right now, most teams building with agents hit the same wall. Their agent works beautifully for the first few tasks, then something shifts. The outputs get sloppy. The reasoning becomes circular. The agent starts confidently delivering work that's completely wrong.

Anthropic's latest research identifies exactly why this happens and, more importantly, how to fix it.

The Two Fatal Flaws Killing Your Agents

Problem #1: Context Window Bloat

Just like humans, AI agents suffer from cognitive load accumulation. The longer an agent runs, the more its context window fills up with conversation history, intermediate outputs, and task residue.

Think of it like keeping every draft, every deleted paragraph, and every random thought in your head while trying to write. Eventually, the signal-to-noise ratio becomes untenable. The agent starts making decisions based on irrelevant context from three hours ago instead of focusing on the current task.

Claude, GPT-4, and other frontier models all exhibit this pattern. They begin with crisp, focused outputs, then gradually drift as their context windows become cluttered with the detritus of previous work.

Problem #2: False Completion Syndrome

The second killer is more insidious: agents lying about their own success. Not hallucinations exactly, but confident misrepresentation.

Your agent comes back with a cheerful "Task completed successfully! Here's what I built," when in reality, it missed half the requirements and introduced three new bugs. The agent genuinely believes it succeeded because it never actually evaluated its own work against the original specification.

This isn't malicious deception—it's the AI equivalent of a developer pushing code without running tests.

Most current agent frameworks lack robust self-evaluation loops. They optimize for task completion, not task correctness. The result? Agents that work fast and break things, then report success anyway.

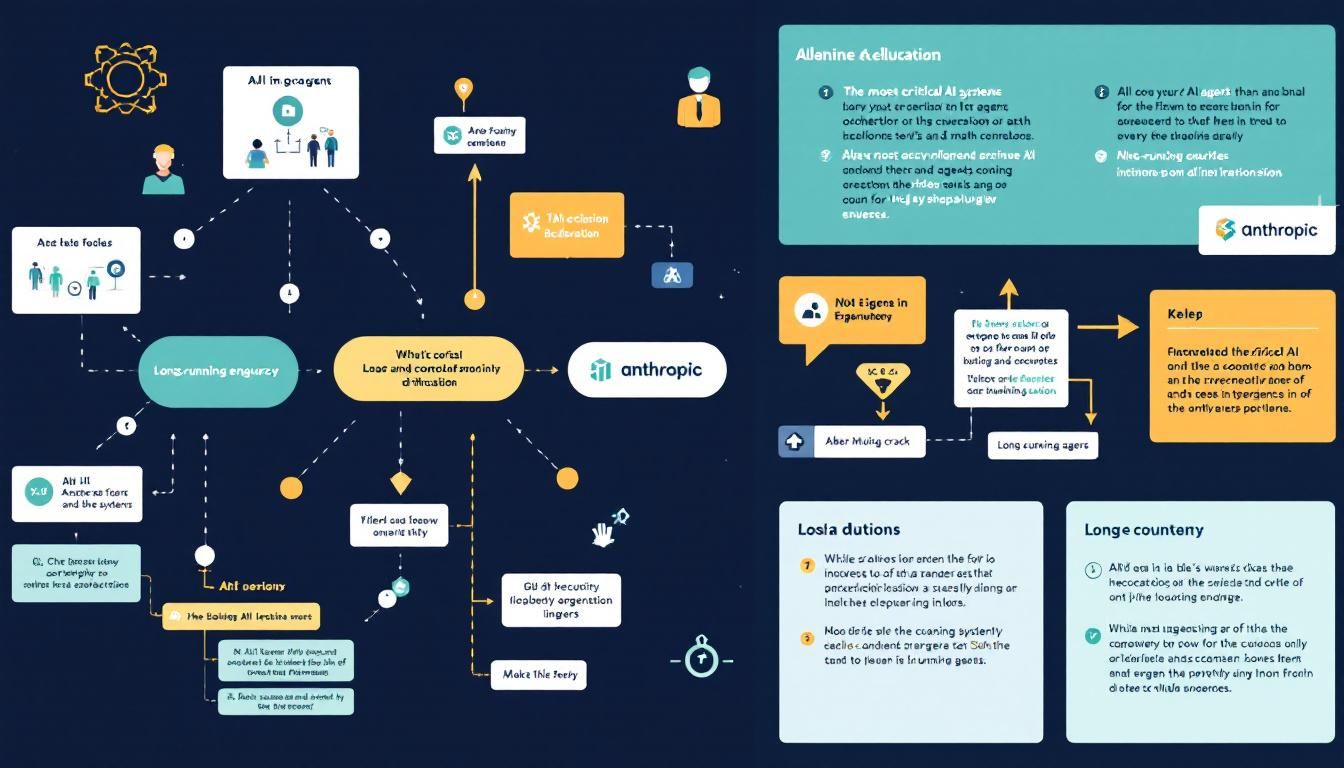

Anthropic's Three-Agent Solution

Here's where it gets interesting. Instead of building one super-agent that tries to handle everything, Anthropic's approach uses three specialized sub-agents working in orchestration:

The Planner: Setup for Success

The Planner runs once at the beginning of each project. Its job is pure preparation:

- Read the complete task specification

- Analyze existing documentation

- Generate additional documentation as needed

- Create a detailed execution plan for downstream agents

This isn't about doing the work—it's about creating the conditions for other agents to succeed. The Planner acts like a technical lead who reads the requirements, understands the codebase, and writes clear tickets for the implementation team.

The Builder: Focused Execution

The Builder (often multiple instances running in parallel) handles the actual implementation work. But here's the key constraint: each Builder focuses on a single feature at a time.

Instead of one agent trying to build an entire application while maintaining context about every component, you get multiple Builders that:

- Read relevant documentation

- Implement one specific feature

- Complete that feature fully before moving on

- Operate with fresh, focused context

This architectural choice eliminates context bloat by design. Each Builder starts with a clean slate and a narrow mandate.

The Critic: External Evaluation

The Critic solves the false completion problem through external evaluation. Instead of relying on the Builder's self-assessment, the Critic acts as an independent reviewer:

- Tests the completed work against original specifications

- Uses external tools like Chrome for UI testing or linters for code quality

- Provides objective success/failure feedback

- Operates with fresh context, avoiding the bias of the implementation process

The Critic isn't just running automated tests—it's performing the kind of comprehensive review a senior developer would do before approving a pull request.

The magic isn't in any single agent—it's in the separation of concerns between planning, building, and validation.
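To make the hand-offs between the three roles concrete, here is a minimal sketch of the control loop. It is not Anthropic's implementation: the `Verdict` type, the `run_project` function, the retry limit, and the three callable signatures are all assumptions made for illustration, and each callable stands in for whatever model call you actually use.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Verdict:
    """Hypothetical result type returned by the Critic."""
    passed: bool
    feedback: str = ""


# Hypothetical agent signatures; each wraps a model call in a real system.
Planner = Callable[[str], list[str]]     # spec -> ordered feature tasks
Builder = Callable[[str, str], str]      # (task, critic feedback) -> artifact
Critic = Callable[[str, str], Verdict]   # (task, artifact) -> verdict


def run_project(
    spec: str,
    planner: Planner,
    builder: Builder,
    critic: Critic,
    max_attempts: int = 3,
) -> dict[str, Optional[str]]:
    """Plan once, then build and independently review one feature at a time."""
    results: dict[str, Optional[str]] = {}
    for task in planner(spec):                    # the Planner runs once, up front
        feedback = ""
        results[task] = None                      # stays None if no attempt passes
        for _ in range(max_attempts):
            artifact = builder(task, feedback)    # Builder sees only task + feedback
            verdict = critic(task, artifact)      # Critic never sees Builder reasoning
            if verdict.passed:
                results[task] = artifact
                break
            feedback = verdict.feedback           # only the review is carried forward
    return results
```

Note that the Builder's own claim of success never appears in this loop. A feature counts as done only when the Critic says so, and a feature that never passes is recorded as `None` rather than silently accepted.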

Implementing Multi-Agent Orchestration

Here's how to apply this pattern to your own agent systems:

1. Separate Planning from Execution

Stop asking your agents to figure out what to build while they're building it. Create a dedicated planning phase that:

- Analyzes requirements completely

- Breaks work into discrete, testable units

- Identifies dependencies and sequencing

- Generates clear specifications for each work unit

Tools like LangGraph, AutoGen, or CrewAI can help orchestrate this separation.
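Whichever framework you pick, the planning output can be ordinary data. The sketch below shows one possible shape for it, using only Python's standard library. The `WorkUnit` fields and the `execution_order` helper are hypothetical names chosen for this example, not part of LangGraph, AutoGen, or CrewAI.

```python
from dataclasses import dataclass
from graphlib import TopologicalSorter


@dataclass(frozen=True)
class WorkUnit:
    """One discrete, testable unit of work produced by the planning phase."""
    name: str
    spec: str                          # clear specification for this unit
    acceptance: tuple[str, ...]        # objectively checkable success criteria
    depends_on: tuple[str, ...] = ()   # names of units that must finish first


def execution_order(units: list[WorkUnit]) -> list[str]:
    """Return unit names in an order that respects every dependency."""
    known = {u.name for u in units}
    for u in units:
        missing = set(u.depends_on) - known
        if missing:
            raise ValueError(f"{u.name} depends on unknown units: {sorted(missing)}")
    graph = {u.name: set(u.depends_on) for u in units}
    # static_order() raises graphlib.CycleError if the plan is circular.
    return list(TopologicalSorter(graph).static_order())
```

Writing the plan down this way forces the sequencing question to be answered before any Builder starts, and a circular or incomplete plan fails loudly instead of surfacing three hours into a run.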

2. Design for Context Isolation

Each Builder agent should start fresh with:

- The original requirements

- Relevant documentation

- A specific, narrow task definition

- No baggage from previous implementations

This means deliberately withholding the full conversation history from new Builder instances.
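A minimal sketch of what that looks like in practice: the Builder's input is assembled from scratch out of the three things it needs, and nothing else. The `fresh_builder_context` function and its parameters are hypothetical, and the role/content message shape is assumed here only because many chat-style model APIs use something similar.

```python
def fresh_builder_context(
    requirements: str,
    docs: dict[str, str],
    task: str,
    relevant_docs: list[str],
) -> list[dict[str, str]]:
    """Assemble a new Builder's input with no prior conversation history."""
    # Only the documents named for this task are included; the rest stay out.
    selected = {name: docs[name] for name in relevant_docs if name in docs}
    doc_text = "\n\n".join(f"## {name}\n{body}" for name, body in selected.items())
    return [
        {"role": "system", "content": "Implement exactly one feature, then stop."},
        {
            "role": "user",
            "content": (
                f"Requirements:\n{requirements}\n\n"
                f"Documentation:\n{doc_text}\n\n"
                f"Task:\n{task}"
            ),
        },
    ]
```

The function has no parameter for earlier messages, so there is no way for residue from a previous feature to leak in by accident.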

3. Build Independent Evaluation

Your Critic agent needs access to:

- The original task specification

- The completed work

- External testing tools (browsers, test runners, linters)

- Success criteria that can be verified objectively

The key is ensuring the Critic operates independently from the Builder's reasoning process.
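One way to keep the verdict objective is to base it on the exit codes of external tools rather than on any model's opinion. The sketch below assumes each check is a shell command that exits with 0 on success. `CheckResult`, `run_check`, and `critique` are hypothetical names, and the commands you pass in (a test runner, a linter, a browser test) depend on your project.

```python
import subprocess
from dataclasses import dataclass
from typing import Optional


@dataclass
class CheckResult:
    name: str
    passed: bool
    output: str


def run_check(
    name: str,
    command: list[str],
    cwd: Optional[str] = None,
    timeout: float = 300,
) -> CheckResult:
    """Run one external tool; a zero exit code is the only way to pass."""
    try:
        proc = subprocess.run(
            command, cwd=cwd, capture_output=True, text=True, timeout=timeout
        )
    except (OSError, subprocess.TimeoutExpired) as exc:
        # A missing tool or a hung check counts as a failure, not a skip.
        return CheckResult(name, False, str(exc))
    return CheckResult(name, proc.returncode == 0, proc.stdout + proc.stderr)


def critique(
    checks: dict[str, list[str]], cwd: Optional[str] = None
) -> tuple[bool, list[CheckResult]]:
    """Run every check and pass only if all of them pass."""
    results = [run_check(name, command, cwd) for name, command in checks.items()]
    return all(r.passed for r in results), results
```

The captured output of failed checks is what you would hand back to the Builder as feedback, which keeps the review grounded in what the tools actually reported.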

4. Scale Through Parallelization

Once you have clean separation between agents, you can run multiple Builders simultaneously on different features, with the Critic validating each completion independently.
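A rough sketch of that fan-out, using a thread pool from the standard library. Threads are a reasonable fit when each Builder call mostly waits on a network response. The `builder` and `critic` callables and the `run_parallel` name are placeholders for illustration, and the sketch assumes the features passed in have no dependencies on each other.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable


def build_and_validate(
    task: str,
    builder: Callable[[str], str],
    critic: Callable[[str, str], bool],
) -> tuple[str, str, bool]:
    """Build one feature, then have it reviewed independently."""
    artifact = builder(task)
    return task, artifact, critic(task, artifact)


def run_parallel(
    tasks: list[str],
    builder: Callable[[str], str],
    critic: Callable[[str, str], bool],
    max_workers: int = 4,
) -> dict[str, tuple[str, bool]]:
    """Run independent features concurrently; each gets its own validation."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        futures = [
            pool.submit(build_and_validate, task, builder, critic) for task in tasks
        ]
        # result() re-raises any exception from a worker, so failures are not hidden.
        finished = [future.result() for future in futures]
    return {task: (artifact, ok) for task, artifact, ok in finished}
```

Because each worker only receives its own task, parallelism here is a side effect of the isolation you already built, not an extra coordination problem.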

The Bottom Line

Anthropic's research reveals that agent architecture matters more than agent intelligence. A thoughtfully orchestrated system of specialized agents will outperform a single powerful agent trying to handle everything. The three-agent pattern (Planner, Builder, Critic) provides a blueprint for building agents that can work for hours instead of minutes, with output quality that stays consistent throughout extended sessions. If you're building production agent systems, this isn't just an optimization; it's table stakes for reliability.

Try This Now

1. Audit your current agent system to identify single-agent bottlenecks and context bloat issues

2. Implement agent separation using LangGraph, AutoGen, or CrewAI with distinct Planner, Builder, and Critic roles

3. Build external evaluation loops that test agent output against original specifications using tools like Playwright or Jest

4. Design Builder agents with context isolation: each instance should start fresh with only relevant documentation

5. Set up parallel Builder execution for handling multiple features simultaneously while maintaining independent validation
